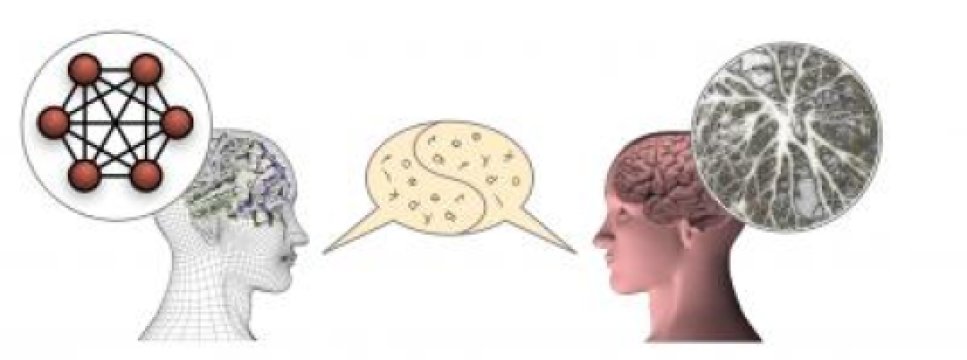

A group of researchers from the University of Sassari (Italy) and the University of Plymouth (UK) has developed a cognitive model, made up of two million interconnected artificial neurons, able to learn to communicate using human language starting from a state of "tabula rasa," only through communication with a human interlocutor. The model is called ANNABELL (Artificial Neural Network with Adaptive Behavior Exploited for Language Learning) and it is described in an article published in the international scientific journal PLOS ONE. This research sheds light on the neural processes that underlie the development of language.

How does our brain develop the ability to perform complex cognitive functions, such as those needed for language and reasoning? Many of us have asked this question, and researchers cannot yet give a complete answer. We know that the human brain contains about one hundred billion neurons that communicate by means of electrical signals. We have learned a lot about how these signals are produced and transmitted among neurons. There are also experimental techniques, such as functional magnetic resonance imaging, that show which parts of the brain are most active during different cognitive activities. But detailed knowledge of how a single neuron works and of what the various parts of the brain do is not enough to answer the initial question.

The ANNABELL model appears to confirm this perspective. ANNABELL has no pre-coded language knowledge; it learns only through communication with a human interlocutor, thanks to two fundamental mechanisms that are also present in the biological brain: synaptic plasticity and neural gating. The model was validated using a database of about 1500 input sentences, based on the literature on early language development, and responded by producing about 500 output sentences containing nouns, verbs, adjectives, pronouns, and other word classes, demonstrating a wide range of capabilities in human language processing.
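
To give a rough feel for the two mechanisms named above, here is a toy Python sketch. It is not the ANNABELL implementation, whose details are given in the PLOS ONE article. It assumes a simple Hebbian rule as a stand-in for synaptic plasticity (a connection is strengthened when the neurons on both sides are active together) and a binary on/off signal as a stand-in for neural gating. The function names `hebbian_update` and `gated_forward`, the learning rate, and the two-neuron layout are all hypothetical choices made for illustration.

```python
def hebbian_update(weights, pre, post, rate=0.1):
    """One Hebbian step (illustrative): w[i][j] += rate * post[i] * pre[j]."""
    return [
        [w + rate * post_i * pre_j for w, pre_j in zip(row, pre)]
        for row, post_i in zip(weights, post)
    ]


def gated_forward(weights, pre, gate):
    """Weighted sum per output neuron, multiplied by a gate (1 = pass, 0 = block)."""
    return [
        g * sum(w * x for w, x in zip(row, pre))
        for row, g in zip(weights, gate)
    ]


# Two input neurons, two output neurons, no connections learned yet.
weights = [[0.0, 0.0], [0.0, 0.0]]
pre = [1.0, 0.0]    # input neuron 0 is active
post = [0.0, 1.0]   # output neuron 1 is active at the same time

# Plasticity: only the connection between the co-active pair is strengthened.
weights = hebbian_update(weights, pre, post, rate=0.5)

# Gating: the same learned connection can be passed through or blocked.
open_out = gated_forward(weights, pre, gate=[1, 1])
closed_out = gated_forward(weights, pre, gate=[1, 0])
```

In this sketch, learning changes only the weight linking the two co-active neurons, while the gate decides whether that learned connection influences the output at a given moment.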